In the latest post in his ‘Spring Batch’ series, Jeremy Yearron runs through some best practice for splitting data batch jobs into smaller steps.

Spring Batch in smaller steps

When designing a batch job, it is not always obvious how to split it into a set of steps. Sometimes the split seems quite easy, but you end up with a design that looks good at first and later works against you.

Let’s take an example: you need to create a job that will process a number of zip files (about 1GB in size) that each contain about 100 XML files. The files are to be downloaded from a website. The XML files are to be parsed, the relevant data extracted, and written to a CSV file to be imported into a database table.

This example is based on a real-world requirement for a job I had a few years ago.

It would be possible to meet these requirements with a job that has only one step: identify a file, download it and process it, then do the same for the next file on the website. However, there is a risk that the web session will time out between downloading one file and trying to download the next one. It would be better to download all the files and then process them one by one.

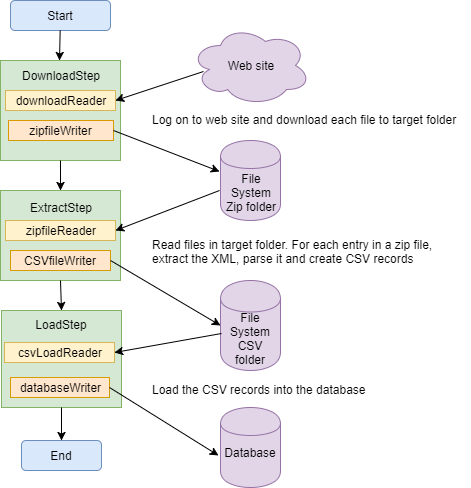

So, we could have a job like this:

This means that we shouldn’t have to worry about session timeout. This is better, but what if the list of files on the website is quite volatile? If you have a connection problem when downloading one of the files, there might be additional files on the page when you come to restart the job, so you retrieve more files than you originally expected. This might be a problem for you, or it might not.

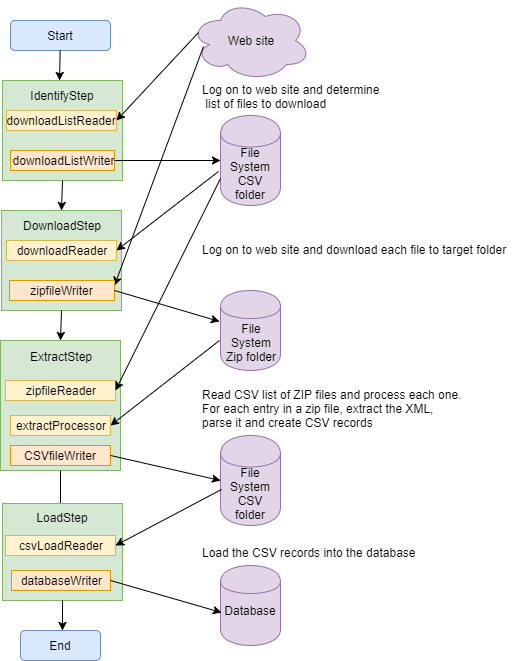

An option would be to break the download into smaller steps:

So now we get a snapshot of the files we will be processing at the start of the job and then process them. By using the list of files to be downloaded to identify the files to be extracted, we can be sure that all of the expected data has been processed. The job will fail if any file is accidentally moved out of the target folder, and will ignore any file in the target folder that was not previously downloaded as part of this job run.
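The check described above can be sketched in plain Java. This is only an illustration, not the code from the original job: the class name `DownloadSnapshot` and the method `resolveExpectedFiles` are hypothetical, and it assumes the snapshot has already been loaded as a list of file names.

```java
import java.io.FileNotFoundException;
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.ArrayList;
import java.util.List;

/** Hypothetical helper: resolves the snapshot list against the target folder. */
final class DownloadSnapshot {

    private DownloadSnapshot() { }

    /**
     * Returns the paths of the files named in the snapshot, in snapshot order.
     * Files in the folder that are not named in the snapshot are never looked at.
     *
     * @throws FileNotFoundException if any file named in the snapshot is missing
     */
    static List<Path> resolveExpectedFiles(Path targetFolder, List<String> snapshotFileNames)
            throws IOException {
        List<Path> resolved = new ArrayList<>();
        List<String> missing = new ArrayList<>();
        for (String name : snapshotFileNames) {
            Path candidate = targetFolder.resolve(name);
            if (Files.isRegularFile(candidate)) {
                resolved.add(candidate);
            } else {
                missing.add(name);
            }
        }
        if (!missing.isEmpty()) {
            // Fail the step rather than silently processing an incomplete set
            throw new FileNotFoundException(
                    "Expected files missing from " + targetFolder + ": " + missing);
        }
        return resolved;
    }
}
```

Because the loop is driven by the snapshot rather than by a directory listing, an unexpected extra file in the folder is ignored and a missing one stops the job.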

So far, so good. The download part of the job is better than before, but what about the extract step?

The zip files are quite large – about 1GB. Identifying each XML file within a zip file, extracting the data, parsing it and creating CSV records is a fair amount of work. A framework like Spring Batch will help you track the processing of each zip file, but not of each XML file. If the job fails while parsing an XML file, it could be difficult to resume processing from that file when you restart the job – you would have to manually keep track of which XML files had been processed, and identify the XML file in the zip to start reading from.

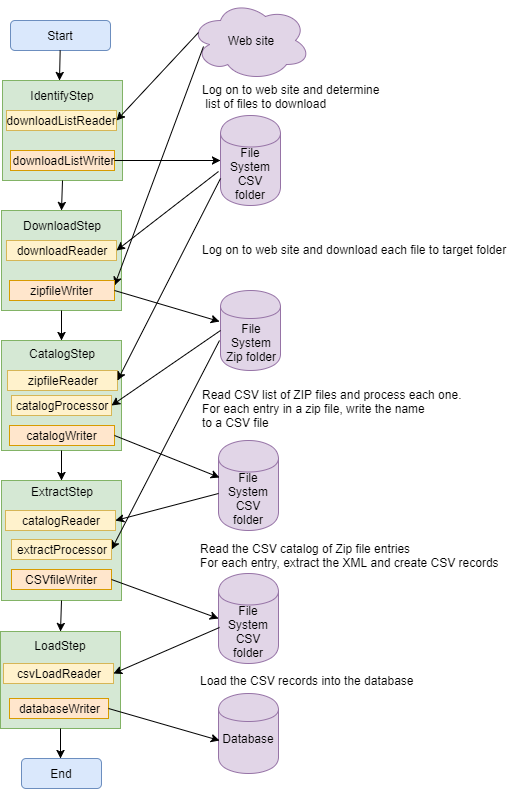

A better way would be to have a step that catalogs the XML files in each zip file in another CSV file, containing the zip file name and the entry name. The extract step can then take each XML file in turn and track progress at that level. A helper class can be used to hold the current position within the current zip file and read forward to find the next entry, or switch to a different zip file if the CSV record is for the next zip file.

Now, if the job fails parsing an XML file, we can restart the job, knowing which XML file is to be processed first.

The downside to using a catalog file is that you are reading each zip file twice, but I find that the extra time spent is worth it, as you have more fine-grained control over the extract processing.
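As a rough illustration of the catalog step, the sketch below lists the XML entries of each zip file and writes one `zipFileName,entryName` line per entry. The class `ZipCatalog` and its methods are hypothetical names, the `.xml` suffix filter is an assumption, and no CSV quoting is done, so it assumes that file and entry names contain no commas. How this would be wired into a Spring Batch step is left out.

```java
import java.io.BufferedInputStream;
import java.io.IOException;
import java.io.InputStream;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.ArrayList;
import java.util.List;
import java.util.Locale;
import java.util.zip.ZipEntry;
import java.util.zip.ZipInputStream;

/** Hypothetical helper: builds the catalog of XML entries for a set of zip files. */
final class ZipCatalog {

    private ZipCatalog() { }

    /** Returns one "zipFileName,entryName" line per XML entry, in archive order. */
    static List<String> catalogLines(Path zipFile) throws IOException {
        List<String> lines = new ArrayList<>();
        try (InputStream in = new BufferedInputStream(Files.newInputStream(zipFile));
             ZipInputStream zis = new ZipInputStream(in)) {
            ZipEntry entry;
            // getNextEntry() returns null once the end of the archive is reached
            while ((entry = zis.getNextEntry()) != null) {
                String name = entry.getName();
                if (!entry.isDirectory() && name.toLowerCase(Locale.ROOT).endsWith(".xml")) {
                    lines.add(zipFile.toString() + "," + name);
                }
            }
        }
        return lines;
    }

    /** Writes the catalog for all zip files, one zip after another, to a CSV file. */
    static void writeCatalog(List<Path> zipFiles, Path catalogCsv) throws IOException {
        List<String> all = new ArrayList<>();
        for (Path zip : zipFiles) {
            all.addAll(catalogLines(zip));
        }
        Files.write(catalogCsv, all, StandardCharsets.UTF_8);
    }
}
```

Keeping the lines in archive order matters: a helper that only reads forward through a zip file can then find each catalogued entry without reopening the file.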

Conclusion

I have found with this example scenario, as with many others, that it pays dividends to take some time to break a job down into a number of small steps, rather than a few bigger steps.

I recommend that you try to do this whenever possible.

Useful Links

Jeremy’s Spring Batch posts (Season 1)

Lots of data? Multiple batches? Sounds like a job for Spring Batch.

Log the record count during processing

How to read list of lists (part I)

How to read list of lists (part II)

Hi, I have a similar scenario: my step 1 tasklet copies the XML files to a folder, and step 2 has a multi-resource reader, a processor and a writer. The issue I am facing is that the reader throws an exception because it finds the resource to be null, even though the resource is being created and I am able to get its value in step 2. I still don’t know why the resource is null. For further details:

https://stackoverflow.com/questions/52881149/spring-batch-pass-value-of-resource-from-step-1-to-the-next-step

Hi Sourav,

Looking at the stackoverflow question, it looks like the MultiResourceItemReader is being created at the start of the job, before the files have been copied over from the shared drive. At this point, the folder containing the copied files won’t exist.

I would configure step 2 so that the reader is only created at the start of that step. Try something like this:

    @StepScope
    @Bean
    public MultiResourceItemReader multiResourceItemReader(@Value("#{stepExecutionContext['someKey']}")
    …

using the parameter value to construct the MultiResourceItemReader.

This way, the reader will only be created once the “someKey” value has been stored in the context and the files have been copied into the appropriate folder.

Hi Jeremy,

I have the same requirement as yours.

{Let’s take an example: you need to create a job that will process a number of zip files (about 1GB in size) that each contain about 100 XML files. The files are to be downloaded from a website. The XML files are to be parsed, the relevant data extracted, and written to a CSV file to be imported into a database table.}

But I am completely new to Spring Batch. Could you please give me some references to help me develop the same thing? It would be a big help. Thanks

Hi Namit,

If you’re new to Spring Batch, I suggest you look at one of the tutorials on the web. There are a number of them. Creating the job you want is not straightforward. I have defined the steps you need in the blog post above, but here is the code for the helper class I use for reading the list of entries in the zip files:

    // Note: "my.package" is a placeholder. Replace it with your own package name;
    // "package" is a reserved word in Java, so it will not compile as written.
    package my.package;

    import java.io.BufferedInputStream;
    import java.io.FileInputStream;
    import java.io.FileNotFoundException;
    import java.io.IOException;
    import java.util.zip.ZipEntry;
    import java.util.zip.ZipInputStream;

    import my.package.ZipFileEntry;

    /**
     * Manage the reading of entries in a Zip file.
     */
    public class ZipHandler {

        private String fileName;
        private ZipInputStream zis;

        /**
         * If the file name in a ZipFileEntry object is different from that of the last call,
         * close the existing open Zip file, if one is currently open, and get an InputStream to read the new file.
         *
         * @param zipFileEntry
         * @throws IOException
         */
        public void openZipFile(final ZipFileEntry zipFileEntry) throws IOException {
            if (!zipFileEntry.getFileName().equals(fileName)) {
                close();
                FileInputStream in = new FileInputStream(zipFileEntry.getFileName());
                zis = new ZipInputStream(new BufferedInputStream(in));
                fileName = zipFileEntry.getFileName();
            }
        }

        /**
         * Close a Zip InputStream if one is currently open.
         *
         * @throws IOException
         */
        public void close() throws IOException {
            if (fileName != null) {
                zis.close();
                fileName = null;
                zis = null;
            }
        }

        /**
         * Obtain an InputStream positioned to read the entry in a zip file
         * whose file name and entry name match the details in the zipFileEntry parameter.
         *
         * @param zipFileEntry
         * @return
         * @throws IOException
         * @throws FileNotFoundException if no matching entry can be found.
         */
        public ZipInputStream getEntityInputStream(ZipFileEntry zipFileEntry) throws IOException, FileNotFoundException {
            openZipFile(zipFileEntry);
            ZipEntry entry;
            ZipInputStream in = null;
            // Find entry in the Zip by reading forward until the entry name is found
            while ((entry = zis.getNextEntry()) != null) {
                if (zipFileEntry.getEntryName().equals(entry.getName())) {
                    in = zis;
                    break;
                }
            }
            if (in != null) {
                return in;
            } else {
                throw new FileNotFoundException("Entry " + zipFileEntry.getEntryName() + " not found in " + zipFileEntry.getFileName());
            }
        }

        /**
         * Determine whether there is a Zip InputStream currently open and being managed.
         *
         * @return true if a Zip file is open, otherwise false.
         */
        public boolean isOpen() {
            return zis != null;
        }
    }

This requires a POJO called ZipFileEntry that has two properties – fileName and entryName.

Your job needs to create a CSV file containing the fileName and entryName for each XML file you need to process. Use a FlatFileItemReader to read this file, and call the getEntityInputStream method in the helper class to get an InputStream to read the XML for that entry. Don’t forget to call the close method after processing the last XML file.
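The ZipFileEntry class itself is not shown above. A minimal sketch of what it might look like follows. Only the two properties and their getters are implied by the helper class; the constructors, the setters and the `fromCsvLine` method are assumptions added for illustration, and the line format `fileName,entryName` assumes that the file name contains no comma.

```java
/** Hypothetical sketch of the ZipFileEntry POJO: one record of the catalog file. */
class ZipFileEntry {

    private String fileName;   // path of the zip file
    private String entryName;  // name of the XML entry inside that zip file

    /** No-argument constructor, useful for bean-style mapping. */
    ZipFileEntry() { }

    ZipFileEntry(String fileName, String entryName) {
        this.fileName = fileName;
        this.entryName = entryName;
    }

    public String getFileName() { return fileName; }

    public void setFileName(String fileName) { this.fileName = fileName; }

    public String getEntryName() { return entryName; }

    public void setEntryName(String entryName) { this.entryName = entryName; }

    /**
     * Assumed helper: parses a "fileName,entryName" line, splitting on the first comma.
     *
     * @throws IllegalArgumentException if the line contains no comma
     */
    static ZipFileEntry fromCsvLine(String line) {
        int comma = line.indexOf(',');
        if (comma < 0) {
            throw new IllegalArgumentException("Not a catalog line: " + line);
        }
        return new ZipFileEntry(line.substring(0, comma), line.substring(comma + 1));
    }
}
```

In a real project the class would normally be declared public, in its own file, in the same package as ZipHandler.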

Hi Jeremy,

I found this article very interesting, especially the comment about reading a file twice. I am wondering if it makes sense for me and would appreciate your thoughts.

I have a process that needs to check that a header (first line) and footer (last line) exist, and also that the number of records between the header and footer equals the count that is on both the header and footer rows. Once these validations are complete I can then process the file with the appropriate business logic.

This file could be very large (over 100K lines) so I am concerned about processing time. However, I don’t want to process the file once and then discover that header and footer are not correct, which would seem to be a waste of processing time.

Do you think this is a good case for processing the file twice?

Hi Steve,

In your situation I would try to process the file once, and write the output of your step to a temporary CSV file. Then, if the header and footer are correct, read that new CSV file in the next step and use it, i.e. import it into a database, or perform other updates that you need.

If the header and footer are not correct you can stop the job or take whatever other action is appropriate in your situation.
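The single-pass approach can be sketched as follows. Everything about the file format here is an assumption made for the example: the header is taken to look like `H|<count>` and the footer like `T|<count>`, and the "processing" simply copies each record to the temporary file. The class and method names are hypothetical.

```java
import java.io.BufferedReader;
import java.io.BufferedWriter;
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;

/** Hypothetical helper: stages records in one pass, then reports whether the counts match. */
final class CountedFileStaging {

    static final String HEADER_PREFIX = "H|";  // assumed header format: H|<count>
    static final String FOOTER_PREFIX = "T|";  // assumed footer format: T|<count>

    private CountedFileStaging() { }

    /**
     * Copies every record between header and footer to the staging file.
     * Returns true only if header and footer exist and both counts equal the number of records.
     */
    static boolean stageAndValidate(Path input, Path staging) throws IOException {
        try (BufferedReader reader = Files.newBufferedReader(input, StandardCharsets.UTF_8);
             BufferedWriter writer = Files.newBufferedWriter(staging, StandardCharsets.UTF_8)) {
            String header = reader.readLine();
            if (header == null || !header.startsWith(HEADER_PREFIX)) {
                return false;
            }
            String previous = reader.readLine();  // held back: it may turn out to be the footer
            if (previous == null) {
                return false;                     // header only, no footer
            }
            long records = 0;
            String line;
            while ((line = reader.readLine()) != null) {
                // Another line follows, so the held-back line is a record, not the footer
                writer.write(previous);           // real business logic would go here
                writer.newLine();
                records++;
                previous = line;
            }
            if (!previous.startsWith(FOOTER_PREFIX)) {
                return false;
            }
            return parseCount(header, HEADER_PREFIX) == records
                    && parseCount(previous, FOOTER_PREFIX) == records;
        }
    }

    /** Returns the count after the prefix, or -1 if it is not a number. */
    private static long parseCount(String line, String prefix) {
        try {
            return Long.parseLong(line.substring(prefix.length()).trim());
        } catch (NumberFormatException e) {
            return -1;
        }
    }
}
```

If the method returns true, the next step can read the staging file; if it returns false, the staging file is discarded and the job stopped.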

Sorry for this late comment.

I really appreciate this kind of tutorial, with a real example and the thinking behind it.

Nevertheless, I have a question about the first step (chunk-oriented). What do the downloadReader and zipFileWriter do in the 1st example (diagram)? I would have thought of a simple tasklet that downloads a file into the target directory…

Hi Benjamin,

If you were only ever going to download a single file in the first step, then a tasklet would be a good solution. However, if there could be multiple files, then a reader/writer step will allow you to process each file and have a record of how many files were downloaded.

In the real-world example that the blog post is based on, there were 2 new files to be downloaded each week. Sorry, I didn’t make that clear.

Thank you Jeremy. It is now much clearer.

So I guess the reader would simply retrieve the file URLs and the writer would actually do the download to the zip target folder, right?

That’s exactly how I would do it.

Hi (again) Jeremy,

I am sorry but, again, I have a question about the 2nd step of the 1st batch diagram.

There is one reader (zipfileReader) and one writer (CSVfileWriter).

– On the 2nd batch diagram, there is also a processor (extractProcessor). Did you forget this processor on the 1st diagram?

In this step there are quite a few things to do: loop over the (downloaded) zip files, loop over the entries of each zip file, extract the entry, parse it, and create CSV records:

– Which ones are part of the reader? I would say at least the loop over the zip files and the entries of each zip file (maybe through some kind of reader with a delegate).

– Which ones are part of the processor (if any)? The extraction and parsing of the entry (but if it was in a reader, StaxEventItemReader could be used)

– Does the writer consist of creating the CSV (maybe appending to a file)?

Note: in your ZipHandler class I cannot see a method to list all the entries of a zip file. It was done somewhere else, I guess?

Thank you very much

Hi Benjamin,

Yes, I forgot the processor in the extract step in the first diagram. Sorry if that caused any confusion.

I would have the reader read the entries in the zip files. It would have to store which zip files it had processed in the step execution context. The reader would pass an InputStream to a processor, which would extract the XML, parse it and create the objects to be written to the CSV file. The writer would just output the objects to the CSV file.

Having said that, I would not actually implement this version of the job – there is too much for the reader and processor to do. I described it as a theoretical stepping stone to get to the job described in the final diagram.

The list of entries in a zip was retrieved by using a ZipInputStream to read the zip file and calling the getNextEntry() method until null is returned. It could have gone in the ZipHandler class, but I have used that when needing to locate a particular entry in a particular zip file.

Jeremy

Thank you very much Jeremy for this clarification!

I agree with you that the 1st version requires too much to do.

Best regards and thanks again